
For biopharma leaders looking to transform their organizations at scale by adopting digital and GenAI solutions.

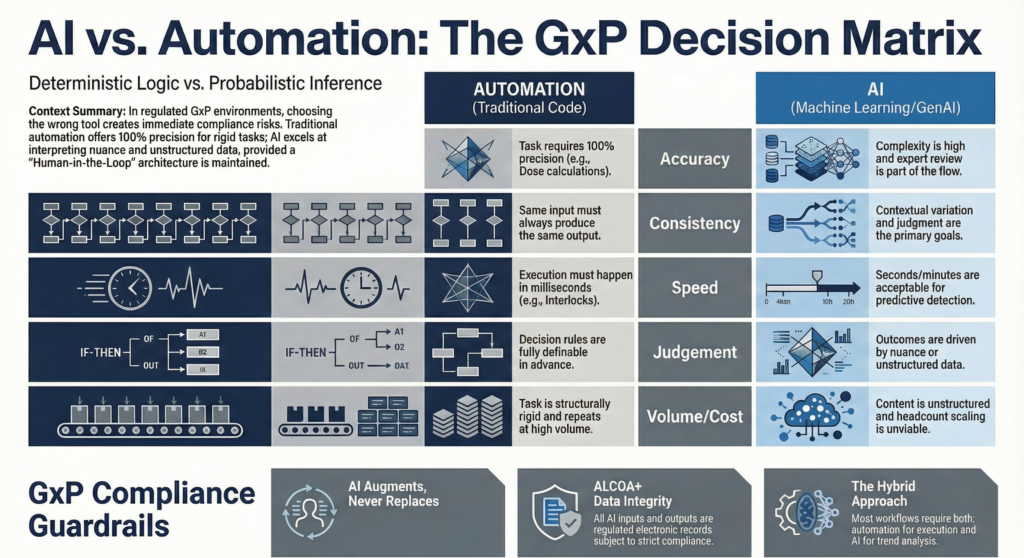

Not sure when to use AI versus automation? Here are five categories to help you decide:

Pharma 4.0 is no longer a future-state conversation. It is here. In a GxP environment, though, knowing when not to use AI matters just as much as knowing where it can create value.

Using AI on the wrong task does not just waste money. It can create compliance risk, weaken confidence in the output, and introduce problems that only become visible during an inspection, a deviation investigation, or an audit trail review.

So how do you choose between traditional rule-based automation and Artificial Intelligence?

For biopharma operations, the decision usually comes down to five things: accuracy, consistency, speed, judgment, and scale. Work through those five clearly and the answer gets easier.

Here is a 5-point framework tailored for biopharma operations:

In GxP, close enough is not good enough.

A 95% success rate may sound acceptable in other settings. In biopharma, that remaining 5% can become a batch disposition issue, a dosing issue, a financial control issue, or a patient safety issue. Tasks such as batch release calculations, dose calculations, label reconciliation, and payroll processing need rule-based automation, not AI.

Automation follows defined logic. It performs the same calculation every time and produces an output that can be tested, verified, and validated. That is what these workflows require.
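To make that concrete, here is a minimal sketch of a rule-based calculation. The function name `dose_mg`, the weight-based rule, the cap, and the rounding step are hypothetical and chosen only for illustration; this is not a clinical formula. The point is that the logic is explicit, so it can be checked against known cases.

```python
from decimal import Decimal, ROUND_HALF_UP


def dose_mg(weight_kg: str, mg_per_kg: str, max_dose_mg: str) -> Decimal:
    """Weight-based dose, capped at a maximum, rounded to 0.1 mg.

    Hypothetical rule for illustration only; not a clinical formula.
    Inputs are strings so Decimal parses them exactly, with no float error.
    """
    weight = Decimal(weight_kg)
    if weight <= 0:
        raise ValueError("weight must be positive")
    raw = weight * Decimal(mg_per_kg)
    capped = min(raw, Decimal(max_dose_mg))
    return capped.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)


# Known cases act as a small verification suite. The same input always
# gives the same output, so these checks stay valid until the rule changes.
assert dose_mg("72.5", "1.5", "120") == Decimal("108.8")  # 108.75 rounds up
assert dose_mg("90", "1.5", "120") == Decimal("120.0")    # 135 is capped
```

Every step here can be read, tested, and re-tested after a change. That is the property a validation package depends on.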

AI has a role, but not as the final decision-maker in precision-critical tasks. If AI-generated input or output becomes part of a regulated process, it can become part of the regulated record. At that point, the burden shifts fast. Attribution, accuracy, contemporaneity, and integrity all matter. Most organizations are not set up to manage that level of control if AI is sitting too close to the point of decision.

AI works better one step back. It can organize information, surface risk, and accelerate review while a qualified human remains accountable for the decision.
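One way to picture "one step back" is a record that holds the AI output as a suggestion until a named person signs off. The sketch below is an assumption about how that could look, not a validated design. The names `AISuggestionRecord`, `record_suggestion`, `sign_off`, and `is_usable` are hypothetical, and a real system would sit inside a qualified platform with its own audit trail.

```python
import hashlib
from dataclasses import dataclass, replace
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AISuggestionRecord:
    model_id: str                    # which model and version produced the output
    input_sha256: str                # fingerprint of the exact input
    output_text: str                 # what the model returned
    created_at: str                  # generation time, UTC, ISO 8601
    reviewer: Optional[str] = None   # accountable human; None until reviewed
    decision: Optional[str] = None   # "accepted" or "rejected"


def record_suggestion(model_id: str, input_text: str, output_text: str) -> AISuggestionRecord:
    """Capture the suggestion at the moment it is generated."""
    digest = hashlib.sha256(input_text.encode("utf-8")).hexdigest()
    now = datetime.now(timezone.utc).isoformat()
    return AISuggestionRecord(model_id, digest, output_text, now)


def sign_off(record: AISuggestionRecord, reviewer: str, decision: str) -> AISuggestionRecord:
    """Return a new record with the human decision; the original is unchanged."""
    if decision not in {"accepted", "rejected"}:
        raise ValueError("decision must be 'accepted' or 'rejected'")
    return replace(record, reviewer=reviewer, decision=decision)


def is_usable(record: AISuggestionRecord) -> bool:
    """A suggestion counts only after a named reviewer accepts it."""
    return record.reviewer is not None and record.decision == "accepted"
```

The frozen dataclass is a design choice for the sketch: the original suggestion cannot be edited in place, and the human decision is captured as a separate, attributable step.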

The snap judgment: If accuracy must be 100% -> AUTOMATION

Same input must always produce same output?

Millisecond execution?

Interpreting context and ambiguity in data?

High volume / rigid structure?

In GxP, the AI vs automation decision isn’t philosophical. It has a compliance cost when you get it wrong.

One thing that is easy to overlook: any AI input or output in a GxP process is a regulated electronic record.

Some workflows are not just about getting to the right answer. They are about getting there the exact same way every time.

That is how SOP-driven execution works. If the same input must produce the same output without variation, traditional automation is the better fit. Code handles that well because the rules are explicit, stable, and testable. In regulated operations, consistency is not a nice-to-have. It is often the expectation.

AI behaves differently. Even when performance is strong, outputs can vary. Wording changes. Context changes. Models change. Drift happens. That variability makes AI useful in some settings and hard to defend in others.

Regulated environments favor explainability. Teams need to understand why a decision was made, how an output was produced, and whether the same logic will hold next week, next quarter, and during an inspection. Fixed code is much easier to validate and defend than a probabilistic model.

AI is more useful when variation is part of the job. Reviewing narrative deviation reports, identifying themes across CAPAs, or tracing the likely impact of a change control across a large document set all require interpretation, not just repetition.

The snap judgment: If the exact same input must yield the exact same output -> AUTOMATION

Some decisions have to happen immediately. There is no room for delay.

Equipment interlocks, closed-loop controls, dose delivery systems, and certain forms of real-time process monitoring need instant, predictable execution. Milliseconds matter. Those workflows belong in automation.

AI introduces inference time, infrastructure dependencies, and output variability. Even when the delay is small, it is still delay. For hard real-time control, that is the wrong tradeoff.

AI helps in a different way. It helps teams see a problem sooner.

It can monitor telemetry, sensor streams, historian data, and process trends at a scale no human team can match. It can detect weak signals early and flag a likely issue before a deviation is triggered, before a parameter moves out of range, or before an equipment problem becomes a production event.

That kind of speed matters too. Not execution speed. Detection speed.

One controls the process. The other improves awareness around the process. Those are different jobs and they should not be confused.

The snap judgment: If the response must be immediate and deterministic -> AUTOMATION. If the goal is earlier detection or prediction -> AI.
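The two jobs can be sketched side by side. In the example below, the limit `HIGH_LIMIT_C`, the sensor readings, and the function names are hypothetical. The detection path uses a simple z-score as a stand-in for a trained model, which keeps the sketch runnable but is far simpler than a production anomaly detector.

```python
from statistics import mean, stdev

HIGH_LIMIT_C = 8.0  # hypothetical alarm limit for a cold-storage sensor


def interlock_tripped(temp_c: float) -> bool:
    """Control path: a fixed rule, the same answer every time, no model."""
    return temp_c > HIGH_LIMIT_C


def drift_warning(history: list[float], latest: float, z_limit: float = 3.0) -> bool:
    """Awareness path: flag a reading that is unusual against recent history,
    even while it is still inside the hard limit."""
    if len(history) < 2:
        return False  # not enough data to judge what is unusual
    spread = stdev(history)
    if spread == 0:
        return latest != history[0]
    return abs(latest - mean(history)) / spread > z_limit


recent = [4.9, 5.0, 5.1, 5.0, 4.9, 5.1, 5.0, 5.0]
reading = 6.2

print(interlock_tripped(reading))      # False: still below the hard limit
print(drift_warning(recent, reading))  # True: far outside the recent pattern
```

The interlock never changes its answer for a given reading. The warning depends on history and a tunable threshold, which is why it informs people rather than controlling the process.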

Automation works well when every rule can be defined up front.

That falls apart when the work involves unstructured text, incomplete information, nuance, and ambiguity. Biopharma has plenty of that. Reviewing years of deviation data to identify recurring patterns, synthesizing clinical or regulatory content, summarizing inspection history, or drafting a first-pass response to a health authority question all require interpretation.

Rule-based logic struggles in those settings because the task is not just execution. It is judgment.

AI can help cut through that complexity. It can review more material, connect more signals, and produce a first pass faster than a human team working manually. That has real value.

It still should not replace the expert.

In regulated environments, human-in-the-loop is not a temporary control while the technology matures. It is the right operating model. AI can accelerate review and sharpen insight. The human expert still has to decide, approve, and stand behind the outcome.

Used correctly, AI does not remove judgment from the workflow. It pushes human judgment to the point where it matters most.

The snap judgment: If the task requires interpreting context and ambiguity -> AI with human review

A lot of AI business cases look good in pilot mode. Volume changes the math.

As usage scales, token costs grow, infrastructure costs grow, and the economics can turn quickly. For high-volume, repetitive, rules-based tasks, traditional automation is usually the better choice. It is cheaper to run, easier to maintain, and easier to validate.
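A rough way to see the math is to compare a usage-based cost with a mostly fixed one. Every number in this sketch is a placeholder assumption chosen for illustration, not a real price or benchmark, and the function names are hypothetical. Substitute your own figures before drawing any conclusion.

```python
from typing import Optional


def ai_cost(docs: int, tokens_per_doc: int, usd_per_1k_tokens: float) -> float:
    """Usage-based cost: grows in direct proportion to volume."""
    return docs * tokens_per_doc / 1000 * usd_per_1k_tokens


def automation_cost(docs: int, fixed_usd: float, usd_per_doc: float) -> float:
    """Mostly fixed cost, plus a small run cost per document."""
    return fixed_usd + docs * usd_per_doc


def break_even_docs(tokens_per_doc: int, usd_per_1k_tokens: float,
                    fixed_usd: float, usd_per_doc: float) -> Optional[float]:
    """Volume above which automation becomes cheaper, or None if it never does."""
    ai_per_doc = tokens_per_doc / 1000 * usd_per_1k_tokens
    if ai_per_doc <= usd_per_doc:
        return None
    return fixed_usd / (ai_per_doc - usd_per_doc)


# Placeholder monthly assumptions, not real prices.
TOKENS_PER_DOC = 4_000
USD_PER_1K_TOKENS = 0.01
FIXED_USD = 2_000.0
USD_PER_DOC = 0.001

for docs in (1_000, 100_000, 1_000_000):
    ai = ai_cost(docs, TOKENS_PER_DOC, USD_PER_1K_TOKENS)
    auto = automation_cost(docs, FIXED_USD, USD_PER_DOC)
    print(f"{docs:>9} docs  AI ${ai:,.0f}  automation ${auto:,.0f}")

print(break_even_docs(TOKENS_PER_DOC, USD_PER_1K_TOKENS, FIXED_USD, USD_PER_DOC))
```

With these placeholder inputs, the AI option is far cheaper at pilot volume and much more expensive at one million documents. The crossover point moves with the assumptions, which is the reason to run the numbers at target volume and not at pilot volume.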

Many operational tasks in biopharma fit that profile. They are large in volume and rigid in structure. In those cases, using AI is not advanced. It is unnecessary.

Scale becomes more interesting when the content itself is highly variable. Extracting data from diverse Certificates of Analysis, reviewing supplier documentation, organizing inspection narratives, or generating first-pass dossier content across a growing portfolio all create a different problem. The volume is high, but the structure is inconsistent. Exceptions pile up. Manual effort becomes the only thing keeping the process moving.

AI can help absorb that variability without forcing the organization to solve growth by adding more people every cycle.

Volume alone is not the question. Variability inside the volume is what matters.

The snap judgment: If the task is repetitive, rigid, and high volume -> AUTOMATION. If the content is highly variable -> AI

Most GxP workflows do not sit neatly in one bucket.

A batch release workflow may require deterministic automation for calculations, approvals, and system controls. The deviation trend analysis behind that workflow may benefit from AI. The final summary may still need a human to review the output, apply judgment, and close the loop.

That is usually the right answer. Not AI alone. Not automation alone. A deliberate combination of both.
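The five snap judgments can be written down as a simple triage function. This is one possible reading of the framework, not a validated decision tool. The `Task` fields, the `recommend` function, and the three labels are hypothetical names introduced for the sketch.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    needs_exact_accuracy: bool = False     # accuracy must be 100%
    needs_identical_output: bool = False   # same input, same output, every time
    needs_realtime_response: bool = False  # immediate, deterministic response
    needs_interpretation: bool = False     # context and ambiguity in the data
    high_volume: bool = False              # scale
    variable_structure: bool = False       # content varies inside the volume


def recommend(task: Task) -> str:
    """Map the five questions to a starting recommendation."""
    deterministic = (
        task.needs_exact_accuracy
        or task.needs_identical_output
        or task.needs_realtime_response
        or (task.high_volume and not task.variable_structure)
    )
    interpretive = task.needs_interpretation or task.variable_structure

    if deterministic and interpretive:
        # Mixed signals: split the workflow and assign each step separately.
        return "HYBRID"
    if deterministic:
        return "AUTOMATION"
    if interpretive:
        return "AI_WITH_HUMAN_REVIEW"
    return "NO_CLEAR_SIGNAL"


print(recommend(Task("dose calculation", needs_exact_accuracy=True)))
print(recommend(Task("deviation trend review", needs_interpretation=True,
                     variable_structure=True)))
print(recommend(Task("batch release workflow", needs_exact_accuracy=True,
                     needs_interpretation=True)))
```

A `HYBRID` result is a prompt to break the workflow into steps and run each step through the same questions, with a human decision at the handoff.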

The teams that handle this well do not start with the technology. They start with the task. They ask a few basic questions up front about accuracy, consistency, speed, judgment, and scale.

That discipline matters more than enthusiasm. Most weak AI strategies start by chasing the tool. Strong operating models start by understanding the work.

Pharma 4.0 does not require organizations to choose between AI and automation. It requires them to know the difference.

Some workflows need deterministic control. Some need contextual interpretation. Many need both, with governance built around the points where one hands off to the other.

As FDA and EMA expectations around AI in the product lifecycle continue to evolve, one principle should stay fixed:

If we cannot govern its judgment, explain its output, and protect the integrity of the data around it, AI will scale compliance risk faster than it scales capability.

The organizations getting this right are doing the hard thinking before the pilot starts. They are mapping the nature of the work, assigning the right technology to the right task, and building governance into the design instead of trying to bolt it on later.

That is the difference between a serious operating model and a pilot that never should have made it out of the room.

Kofi A. Kumi helps biopharma leaders design transformations that last. With a foundation in chemistry and a career in enterprise systems, he bridges strategy and execution across regulatory, quality, and clinical operations. His work spans top-5 pharma, mid-sized biotechs, and pre-commercial innovators, leading initiatives that have reduced procedural complexity by 80%, driven inspection readiness, and reshaped global operating models.

T: +1 602-301-1314

kofi.kumi@scimitar.com